Tuesday, April 17, 2012

6 Free SEO Tools to Powercharge Your SEO Campaign

10:51 PM

Google AdWords, Search Engine Optimization, SEO, SEO Tools, Tips, XML Sitemaps

Every SEO professional agrees that doing SEO barehanded is a dead-end deal. SEO software makes that time-consuming and painstaking job a lot faster and easier.

There are a great number of SEO tools designed to serve every purpose of website optimization. They give you a helping hand at each stage of SEO starting with keyword research and finishing with the analysis of your SEO campaign results.

Nowadays you can find a wide range of totally free SEO tools on the web. This overview of the most popular free SEO tools will help you pick the ones that can make your website’s popularity soar.

Google AdWords Keyword Tool

Keywords are the foundation stone of every SEO campaign, so finding and refining them is the first step on the way to Google’s top. Of course you may put on your thinking cap and make up the list of keywords on your own. But that is like a shot in the dark, since your ideas may differ significantly from the terms people really enter in Google.

Here, Google AdWords Keyword Tool comes in handy. Although this tool was originally designed to assist Google AdWords advertisers, you can use it for keyword research too. It helps you find out which keywords to target, shows the competition for the selected keywords, lets you see estimated traffic volumes and suggests popular keywords. Google AdWords Keyword Tool has a user-friendly interface and, moreover, it’s totally free. Paid alternatives like Wordtracker or Keyword Discovery may be effective as well, but Google AdWords Keyword Tool is the ultimate leader among free keyword research tools.

XML Sitemaps Generators

To make sure all your pages get crawled and indexed, you should set up sitemaps for your website. They are like ready-to-crawl webs for Google spiders that let them quickly find out what pages are in place and which ones have been recently updated. Sitemaps can also be beneficial for human visitors, since they organize the whole structure of a website’s content and make navigation a lot easier. The XML Sitemap Generator lets you create XML and ROR sitemaps that can be submitted to Google, Yahoo! and lots of other search engines. This SEO tool also lets you generate HTML sitemaps that improve website navigation for humans and make your site visitor-friendly.
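If you are curious what such a generator produces, here is a minimal sketch in Python that builds an XML sitemap by hand. The `build_sitemap` function and the example.com URLs are made up for illustration; the namespace is the one defined by the sitemaps.org protocol. A real generator would crawl your site to collect the pages instead of taking a hard-coded list.

```python
import xml.etree.ElementTree as ET

# Namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for (url, last_modified) pairs.

    `pages` is a hypothetical input format chosen for this sketch.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2012-04-17
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    ("http://www.example.com/", "2012-04-17"),
    ("http://www.example.com/about", "2012-04-12"),
])
print(sitemap_xml)
```

The `lastmod` element is what tells the spiders which pages have been recently updated.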

SEO Book’s Rank Checker

To check whether your optimization campaign is blowing hot or cold, you need a good rank checking tool to measure the fluctuations of your website’s rankings. SEO Book’s Rank Checker can be of great help here. It’s a Firefox plug-in that lets you check rankings in the Big Three (Google, Yahoo! and Bing) and easily export the collected data. All you have to do is enter your website’s URL and the keywords you want to check your positions for. That’s it: in a few seconds SEO Book’s Rank Checker provides you with the results. It’s fast, easy to use and free.

Backlink Watch

Links are like the ace of trumps in the Google popularity game. The point is that the more quality links there are in your backlink profile, the higher your website ranks. That’s why a good SEO tool for link research and analysis is a must-have in your arsenal. Backlink Watch is an online backlink checker that not only shows which sites link to your page, but also gives you some data for SEO analysis, such as the title of the linking page, the anchor text of the link and whether the link is dofollow or nofollow. The only drawback of this tool is that it shows only 1,000 backlinks per website, regardless of how many backlinks the website actually has.
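To give an idea of the data a backlink checker collects from each linking page, here is a rough Python sketch that pulls the anchor text and the nofollow status out of a piece of HTML. This is not how Backlink Watch works internally (that is not documented here); `LinkCollector`, `extract_links` and the sample markup are invented for this example. Note that there is no actual "dofollow" attribute: a link counts as followed when its `rel` attribute does not contain `nofollow`.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect href, anchor text and nofollow status of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._current = None  # link currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            attrs = dict(attrs)
            rel_values = (attrs.get("rel") or "").lower().split()
            self._current = {
                "href": attrs.get("href"),
                "anchor": "",
                "nofollow": "nofollow" in rel_values,
            }

    def handle_data(self, data):
        if self._current is not None:
            self._current["anchor"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["anchor"] = self._current["anchor"].strip()
            self.links.append(self._current)
            self._current = None


def extract_links(html):
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


sample = (
    '<p><a href="http://example.com/">Example</a> '
    '<a rel="nofollow" href="http://example.org/x">Other site</a></p>'
)
links = extract_links(sample)
for link in links:
    print(link["href"], repr(link["anchor"]), "nofollow" if link["nofollow"] else "followed")
```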

Compete

Compete[dot]com provides a large pool of analytical data to fish from. It’s an online tool for monitoring and analyzing online competition that provides two categories of services: free Site Analytics and subscription-based Search Analytics, which lets you take advantage of some additional features. Compete is an out-and-out SEO tool that lets you see traffic and engagement metrics for a specified website and find sites for affiliate and link building purposes. Compete is also a great keyword analyzer, since it lets you analyze your online competitors’ keywords. Other features worth mentioning are subdomain analysis, export to CSV, tagging etc.

SEO PowerSuite

SEO PowerSuite is an all-in-one SEO toolkit that lets you cover all aspects of website optimization. It consists of four SEO tools to nail all SEO tasks. WebSite Auditor is a great leg-up for creating smashing content for your website. It analyzes your top 10 online competitors and works out a surefire plan based on the best optimization practices in your niche. Rank Tracker is great at monitoring your website’s positions and generating the most click-productive words. SEO SpyGlass is powercharged SEO software for backlink checking and analysis. This is the only SEO tool that lets you discover up to 50,000 backlinks per website and generate reports with a ready-to-use website optimization strategy. The last and the most advanced in this row is LinkAssistant. It is a feature-rich powerhouse SEO tool for link building and management that shoulders the main aspects of off-page optimization.

Free versions of these four tools let you tackle the main optimization challenges. You can also buy an extended version of SEO PowerSuite with advanced features to make your optimization campaign complete.

Summing things up, there are lots of SEO tools that will never burn a hole in your pocket and that let you run your website optimization campaign effectively with a minimum of cost and effort.

PPC and SEO: Benefits Of A Collaborative Approach

10:25 PM

Google AdWords, News, Pay Per Click, PPC, Search Engine Optimization, SEO

First, let us present the independent merits of Pay Per Click (PPC) advertising, also commonly referred to as Search Engine Marketing (SEM) or paid search. Whether you use Baidu Advertising, Google AdWords and/or Bing adCenter to run your PPC campaigns, each platform essentially has the same primary benefits:

- Instant visibility within the SERPs (unlike SEO)

- Flexibility

- High level of control

- Pay per click model (minimal wasted media spend)

Aside from all of these benefits, having a PPC campaign is a great way to gain more insight to ultimately boost SEO performance. The search industry is now realizing that collaboration between SEO and PPC professionals is a key factor of successful search marketing.

Reason 1: Discovering New Keyword Opportunities

When optimizing for organic search, we generally target one primary keyword and one secondary keyword per page. This means that the total number of keywords we can target is limited by the amount of content on the site. In contrast, with PPC we can target thousands of keywords, which gives us an extraordinary amount of data to play with, including important metrics such as conversion rate. Keywords that convert well in a paid search campaign are also likely to convert well in organic search.
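As a quick illustration, here is a small Python sketch that ranks PPC keywords by conversion rate (conversions divided by clicks) to shortlist candidates for organic targeting. The keyword figures are invented, and the `min_clicks` cut-off of 100 is just an assumption to filter out keywords with too little data to trust.

```python
def conversion_rate(clicks, conversions):
    """Conversions per click; 0.0 when there were no clicks."""
    return conversions / clicks if clicks else 0.0


def rank_keywords(stats, min_clicks=100):
    """Sort keywords by conversion rate, best first.

    `stats` maps keyword -> (clicks, conversions). Keywords with fewer
    than `min_clicks` clicks are skipped as statistically too thin.
    """
    eligible = {
        keyword: conversion_rate(clicks, conversions)
        for keyword, (clicks, conversions) in stats.items()
        if clicks >= min_clicks
    }
    return sorted(eligible.items(), key=lambda item: item[1], reverse=True)


# Made-up sample data from a hypothetical PPC campaign.
ppc_stats = {
    "blue widgets": (1200, 48),
    "cheap widgets": (900, 18),
    "widget repair": (40, 6),
}
ranking = rank_keywords(ppc_stats)
for keyword, rate in ranking:
    print(f"{keyword}: {rate:.1%}")
```

Here "widget repair" has the highest raw rate but is left out because 40 clicks is not much to go on.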

Reason 2: Filling Content Gaps

Search engines have made a number of updates to their algorithms with the aim of providing their users with “fresh” content. Creating and adding good content to your website on a regular basis is more important than ever, but you may be at a loss as to what to write about. Creating more content that you think your users will find useful is fine, but wouldn’t it be better to create a content plan that is backed up by data? Identify your potential new content topics, create ad groups based on those topics and add them to your PPC campaign. Once you have enough data, compare the KPIs of each ad group to determine which content gaps you should fill.

Reason 3: Increasing Traffic Exponentially

Many people believe that if they already rank well for a given keyword in the organic search results, there is no need to target the same keyword in a PPC campaign. After all, clicks from organic search results are free, right?

With experience in both paid and organic search, we know that when you simultaneously target the same keywords via both channels, an exponentially larger amount of traffic will be generated… here’s why. If your website is organically ranked at #1 on Google but has no PPC presence, you are giving competitors an opportunity to steal your traffic via PPC ads, which often appear above the organic search results.

Reason 4: Increasing CTR From Organic Search

The copy used in PPC text ads is arguably the most vital element affecting the Click-Through Rate (CTR). In SEO, we know that the page title and meta description are visible to human users within the SERPs; they should always be created with this in mind. Although the meta description is no longer used by major search engines to determine ranking, a well-written meta description can be the difference between someone visiting your site and someone visiting a competitor’s site. By analyzing PPC ad copy, it is possible to discover which words and calls to action perform best; you can then use your findings to optimize the meta description and increase CTR from organic search.

Thursday, April 12, 2012

Geotargeting Websites With Country Code TLDs

As most of you know, Google allows you to set the geographic target of domains with generic TLDs (.com, .net, .org etc) via the Google Webmaster Tools console. Webmasters who own such domains can use the geotargeting feature to indicate that particular segments of their websites target different countries. Those segments can be either subfolders or subdomains of the main website.

The Geotargeting feature is extremely effective for SEO since it gives a significant boost to the rankings of the website in the targeted country version of Google. This means that if you own the domain example.com and you specify that the subdomain fr.example.com targets France, then this subdomain’s rankings on Google.fr will improve significantly. At the same time, this will have the opposite effect on the rankings of the subdomain in the other Google versions (google.co.uk, google.com, google.de etc).

What About The Domains With ccTLDs?

Domains that have country-specific TLDs (also known as country code TLDs, or ccTLDs) are not allowed to use the geotargeting feature in the Google Webmaster Console. This is because Google considers that the entire domain is already targeted to the country related to the ccTLD of the domain. So if you own the domain example.fr, Google considers that your entire website targets France, and thus if you create the subdomain de.example.fr you will not be able to target it to Germany.

What Does Google Say About This?

We posted this question on Google Moderator, and Matt Cutts replied with a Google Webmaster video:

As you can see Matt did not mention anything that changes what we already know. Here are the main points of his reply:

- Google considers that “you are swimming upstream” if you try to Geotarget a domain with ccTLD to a different country.

- Matt advises us to get a generic TLD and target different segments to different countries

- When Google sees a .jp website, it assumes it is more relevant to Japan rather than to other countries

- There are around 20 ccTLDs that are treated as generic

So, are you really “swimming upstream”?

At first glance, trying to target a website with ccTLD to a different country is not really helpful for the users. On the other hand trying to use geotargeting for a particular segment of the website makes more sense but still it is not the best option. Nevertheless there are 3 important exceptions to this rule.

1. Websites Related To The Hotel Industry

Websites related to the hotel industry need to use geotargeting even if they have country-specific TLDs. If you are the webmaster of, or are doing SEO for, a hotel in Santorini (one of the most beautiful Greek islands), it really makes sense to use a website with a .GR top-level domain. It also makes sense to have a segment of the site dedicated to important markets such as France. One of the basic things that you will do in order to build an effective campaign targeting France is to translate the website into French and to publish special offers and services specifically for travelers from France.

Unfortunately though, due to the limitation imposed by Google, you will not be able to set geotargeting to France for this website segment because the entire domain is already targeted to Greece. Also, you might not be able to buy an .FR domain because, according to the rules, French domains can only be registered by individuals and companies located in France. (Even though this changed a couple of months ago, this is a common problem with other restricted ccTLDs.)

So at the end of the day, you have a subdomain or segment of the website which is highly relevant to people from France and at the same time is geotargeted to Greece in the Google Webmaster Console. Does this make sense?

2. Generic Country Code Top Level Domains (gccTLDs)

As Matt said in the video, there are several TLDs which, even though they are related to specific countries, are used by a large number of people as generic. The most popular of them are .co, .fm, .me and .tv. Fortunately, Google treats those ccTLDs as generic and thus the geotargeting feature is enabled for them in the Google Webmaster Console. Here is a list of all the ccTLDs that Google considers generic: .as, .bz, .cc, .cd, .co, .dj, .fm, .la, .me, .ms, .nu, .sc, .sr, .tv, .tk and .ws.

3. Websites That Use Domain Hacks

Another reason why someone might choose a specific ccTLD is to create a domain hack. A domain hack is basically a domain name which combines the various domain levels and the TLD to spell out the full name of the website. A few of the most well-known domain hacks are del.icio.us, goo.gl, fold.it and youtu.be.

Most domain hacks use ccTLDs like .al, .as, .at, .co, .in, .is, .it, .me and .us. Guess what? Only 3 of these ccTLDs (.as, .co and .me) are on the list that Google considers generic!
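You can check the overlap yourself with a few lines of Python. The sketch below uses the two lists exactly as quoted in this article; the helper names `tld_of` and `treated_as_generic` are made up, and the TLD extraction is deliberately naive (it only looks at the last label of the domain).

```python
# ccTLDs that Google treats as generic, as listed in this article.
GENERIC_CCTLDS = {
    "as", "bz", "cc", "cd", "co", "dj", "fm", "la",
    "me", "ms", "nu", "sc", "sr", "tv", "tk", "ws",
}

# ccTLDs commonly used for domain hacks, as listed in this article.
DOMAIN_HACK_TLDS = {"al", "as", "at", "co", "in", "is", "it", "me", "us"}


def tld_of(domain):
    """Return the last label of a domain name, lower-cased."""
    return domain.rstrip(".").rsplit(".", 1)[-1].lower()


def treated_as_generic(domain):
    """True if the domain's ccTLD is on the generic list above."""
    return tld_of(domain) in GENERIC_CCTLDS


overlap = sorted(DOMAIN_HACK_TLDS & GENERIC_CCTLDS)
print("Hack TLDs treated as generic:", overlap)

for domain in ["del.icio.us", "goo.gl", "fold.it", "youtu.be"]:
    print(domain, "->", "generic" if treated_as_generic(domain) else "country-targeted")
```

By this list, none of the four well-known domain hacks mentioned above sits on a TLD that Google treats as generic.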

How to Speed up Search Engine Indexing?

4:05 AM

Search Engine Optimization, SEO, Tips

It’s common knowledge that nowadays users search not only for trusted sources of information but also for fresh content.

That’s why, over the last couple of years, the search engines have been working on speeding up their indexing process. A few months ago, Google announced the completion of its new indexing system called Caffeine, which promises fresher results and faster indexation.

The truth is that, compared to the past, the indexing process has become much faster. Nevertheless, lots of webmasters still face indexing problems, either when they launch a new website or when they add new pages. In this article we will discuss 5 simple SEO techniques that can help you speed up the indexation of your website.

1. Add Links on High Traffic Websites

The best thing you can do in such situations is to increase the number of links that point to your homepage or to the page that you want to get indexed. The number of incoming links and the PageRank of the domain directly affect both the total number of indexed pages of the website and the speed of indexation.

As a result, by adding links from high traffic websites you can reduce the indexing time. This is because the more links a page receives, the more likely it is to be indexed. So if you face indexing problems, make sure you add your link to your blog, post a thread in a relevant forum, or write press releases or articles that contain the link and submit them to several websites. Additionally, social media can be handy in such situations, despite the fact that in most cases their links are nofollowed. Keep in mind that even though the major search engines claim that they do not follow nofollowed links, experiments have shown that not only do they follow them, but they also index the pages faster. (Note that the fact that they follow them does not mean that they pass any link juice through them.)

2. Use XML And HTML Sitemaps

Theoretically, search engines are able to extract the links of a page and follow them without needing your help. Nevertheless, it is highly recommended to use XML or HTML sitemaps, since it is proven that they can help the indexation process. After creating the XML sitemaps, make sure you submit them to the webmaster consoles of the various search engines and include them in robots.txt. Also keep your sitemaps up to date and resubmit them when you make major changes to your website.

3. Work on Your Link Structure

As we saw in previous articles, link structure is extremely important for SEO because it can affect your rankings, the PageRank distribution and the indexation. Thus if you face indexing problems check your link structure and ensure that the not-indexed pages are linked properly from webpages that are as close as possible to the root (homepage). Also make sure that your site does not have duplicate content problems that could affect both the number of pages that get indexed and the average crawl period.

A good method to achieve the faster indexation of a new page is to add a link directly from your homepage. Finally if you want to increase the number of indexed pages, make sure you have a tree-like link structure in your website and that your important pages are no more than 3 clicks away from the home page (Three-click rule).

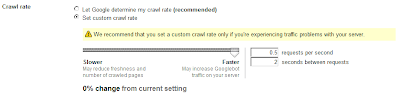
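If you have a map of your internal links (for example from a crawl), checking the three-click rule is a simple breadth-first search from the homepage. Here is a minimal Python sketch; the `site` dictionary is a made-up example where each page maps to the pages it links to.

```python
from collections import deque


def click_depths(links, home):
    """Return the minimum number of clicks needed to reach each page from `home`.

    `links` maps a page to the list of pages it links to.
    Pages that cannot be reached from `home` are absent from the result.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link structure.
site = {
    "/": ["/products", "/blog"],
    "/products": ["/products/widgets"],
    "/products/widgets": ["/products/widgets/blue"],
    "/products/widgets/blue": ["/products/widgets/blue/specs"],
    "/blog": [],
}

depths = click_depths(site, "/")
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print("More than 3 clicks from home:", too_deep)
```

Any page that shows up in `too_deep` is a candidate for a link from the homepage or from a page closer to the root.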

4. Change The Crawl Rate

Another way to decrease the indexing time in Google is to change the crawl rate from the Google Webmaster Tools Console. Setting the crawl rate to “faster” will allow Googlebot to crawl more pages but unfortunately it will also increase the generated traffic on your server. Of course since the maximum allowed crawl rate that you can set is roughly 1 request every 3-4 seconds (actually 0.5 requests per second + 2 seconds pause between requests), this should not cause serious problems for your server.
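To put that maximum rate into perspective, here is the arithmetic as a tiny Python sketch. It assumes one reading of the figures above: 0.5 requests per second means 2 seconds per request, and the 2 second pause is added on top, giving one request every 4 seconds. The function names are made up for this example.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def seconds_per_request(requests_per_second, pause_seconds):
    """Time between the start of two consecutive requests."""
    return 1 / requests_per_second + pause_seconds


def max_requests_per_day(requests_per_second, pause_seconds):
    """Upper bound on requests Googlebot could make in 24 hours at this rate."""
    interval = seconds_per_request(requests_per_second, pause_seconds)
    return int(SECONDS_PER_DAY // interval)


interval = seconds_per_request(0.5, 2)
daily = max_requests_per_day(0.5, 2)
print(f"One request every {interval:.0f} seconds, at most {daily} requests per day")
```

Under these assumptions the ceiling is 21,600 requests a day, which most servers can handle comfortably.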

5. Use the Available Tools

The major search engines provide various tools that can help you manage your website. Bing provides you with the Bing Toolbox, Google supports the Google Webmaster Tools and Yahoo offers the Yahoo Site Explorer. In all the above consoles you can manage the indexation settings of your website and your submitted sitemaps. Make sure that you use all of them and that you regularly monitor your websites for warnings and errors. Also resubmit your sitemaps or ping the search engines’ sitemap services when you make a significant number of changes to your website. A good tool that can help you speed up this pinging process is the Site Submitter; nevertheless, it is highly recommended that you also use the official tools of every search engine.

If you follow all the above tips and you still face indexing problems, then you should check whether your website is banned from the search engines, whether it is developed with search engine friendly techniques, whether you have enough domain authority to get that number of pages indexed, and whether you have made a serious SEO mistake (for example, blocking the search engines by using robots.txt or meta-robots).

7 Deadly Mistakes To Avoid In Google Webmaster Tools Console

3:51 AM

Google, Google Webmaster Tools, Search Engine Optimization, SEO, Tips

The Google Webmaster Tools is a free service that allows webmasters to submit their websites & sitemaps to Google, affect their indexing process, configure how the search engine bots crawl their sites, get a list of all the errors that were detected on their pages and get valuable information, reports and statistics about their websites. Certainly it is a very powerful console that can help Webmasters not only optimize their websites but also control how Google accesses their sites.

Unfortunately though, even if using the basic functionality and changing the default configuration is relatively straightforward, one should be very careful while using the advanced features. This is because some of the options can heavily affect the SEO campaign of the website and change the way that Google evaluates it. In this article we discuss the 7 most important mistakes that one can make while using the advanced features of Google Webmaster Tools and we explain how to avoid a disaster by configuring everything properly.

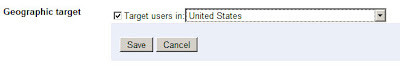

1. Geographic Targeting Feature

Geographic Targeting is a great feature that allows you to link your website to a particular location or country. It should be used only when a website targets users from a particular country and when it does not really make sense to attract visitors from other countries.

This feature is enabled if your domain has a generic (neutral) top-level domain such as .com, .net, .org etc, while it is preconfigured if it has a country-specific TLD. By pointing your site to a particular country you can achieve a significant boost in your rankings on the equivalent country version of Google. So, for example, by setting the Geographic Targeting to France you can positively affect your rankings on Google.fr. Unfortunately, this will also lower your rankings on the other Google country versions. This means that you might be able to boost your rankings on Google.fr but actually hurt your results on Google.com and Google.co.uk.

So don’t use this feature unless your website targets only people from a specific country.

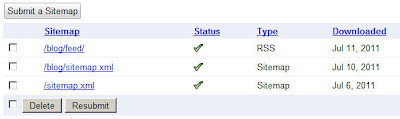

2. Sitemap Submission

Google allows you to submit a list of sitemaps via the console, and this will drastically speed up the indexing of your website. Even though this is a great feature for most webmasters, in a few cases it can cause two major problems.

The first problem is that sometimes the XML sitemaps appear in search results, so if you don’t want your competitors to be able to see them, make sure you gzip them and submit the .gz version to Google. Also, obviously, you shouldn’t name the files “sitemap.xml” or add their URLs to your robots.txt file.

The second problem appears when you try to index a large number of pages (several hundred thousand) very quickly. Even though sitemaps will help you index those pages (given that you have enough PageRank), you are also very likely to reach a limit and raise a spam flag for your website. Don’t forget that the Panda Update specifically targets websites with low quality content that try to index thousands of pages very fast.

So if you want to avoid exposing your site architecture be extra careful with sitemap files and if you don’t want to reach any limitation use a reasonable number of sitemaps.
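If you follow the suggestion to submit a gzipped sitemap, compressing it takes only a couple of lines in Python with the standard `gzip` module. This is a minimal sketch; the sitemap content and the `gzip_sitemap` helper are made up for the example.

```python
import gzip


def gzip_sitemap(xml_text):
    """Return the gzip-compressed bytes of a sitemap given as a string."""
    return gzip.compress(xml_text.encode("utf-8"))


sitemap_xml = (
    '<?xml version="1.0" encoding="UTF-8"?>\n'
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>http://www.example.com/</loc></url>"
    "</urlset>"
)

compressed = gzip_sitemap(sitemap_xml)

# Sanity check: decompressing must give back the original XML.
restored = gzip.decompress(compressed).decode("utf-8")
print(len(sitemap_xml), "characters ->", len(compressed), "bytes compressed")
```

To publish it, write `compressed` to a file opened in binary mode under whatever non-obvious `.gz` name you have chosen, and submit that URL in the console.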

3. Setting the Crawl Rate

Google gives you the ability to set how fast or slow you want Googlebot to crawl your website. This will affect not only the crawl rate but also the number of pages that get indexed every day. If you decide to change the default setting and set a custom crawl rate, remember that a very high crawl rate can consume all of the bandwidth of your server and create a significant load on it, while a very low crawl rate will reduce the freshness and the number of crawled pages.

For most webmasters it is recommended to let Google determine their crawl rate.

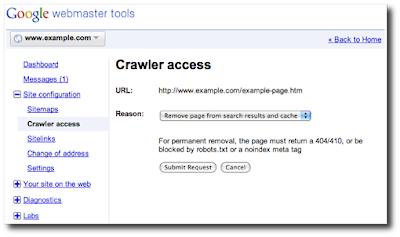

4. Crawler Access

On the Crawler Access tab, Google lets you see which parts of your website it is allowed to crawl. It also gives you the opportunity to generate a robots.txt file and request a URL removal. These features are powerful tools in the hands of experienced users; nevertheless, a misconfiguration can lead to catastrophic results that heavily affect the SEO campaign of the website. Thus it is highly recommended not to block any parts of your website unless you have a very good reason for doing so and you know exactly what you are doing.

Our suggestion is that most webmasters should not block Google from any directory and let it crawl all of their pages.
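Before uploading a new robots.txt, it is worth testing it against a few of your important URLs. Here is a small sketch using Python’s standard `urllib.robotparser` module; the robots.txt rules, the URLs and the `blocked_urls` helper are invented for this example, and Python’s parser may not match Googlebot’s behaviour in every edge case.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content blocks for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt content to be tested before it goes live.
robots_txt = """\
User-agent: *
Disallow: /admin/
"""

important_urls = [
    "http://www.example.com/",
    "http://www.example.com/admin/login",
    "http://www.example.com/products/",
]

blocked = blocked_urls(robots_txt, important_urls)
print("Blocked for Googlebot:", blocked)
```

If a page you want in the index shows up in `blocked`, fix the rules before you publish the file.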

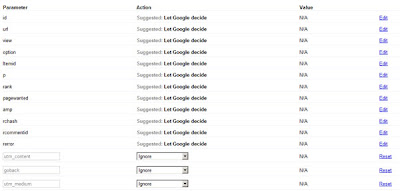

5. Parameter Handling

After crawling both your Website and the external links that point to your pages, Google presents to you the most popular URL parameters that are passed to your pages. In the Parameter Handling console Google allows you to select whether a particular parameter should be “Ignored”, “Not Ignored” or “Let Google decide”.

These GET parameters can be anything from product IDs and session IDs to Google Analytics campaign parameters and page navigation variables. In many cases these parameters can lead to duplicate content issues (for example session IDs or Google Analytics campaign parameters), while in other cases they are essential parts of your website (product or category IDs). A misconfiguration in this console could have disastrous results for your SEO campaign, because you can either make Google index several duplicate pages or make it drop useful pages of your website from the index.

This is why it is highly recommended to let Google decide how to handle each parameter, unless you are absolutely sure what a particular parameter is for and whether it should be ignored or kept. Last but not least, remember that this console is not the right way to deal with duplicate content. It is highly recommended to work on your internal link structure and to use 301 redirects and canonical URLs before trying to ignore or block particular parameters.
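To see why such parameters create duplicates, it helps to strip them and compare what is left. The Python sketch below does that with the standard `urllib.parse` module. The `IGNORED_PARAMS` set and the `canonical_url` helper are assumptions made for this example; which parameters are safe to drop depends entirely on your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Parameters assumed NOT to change the page content (hypothetical list).
IGNORED_PARAMS = {"sessionid", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url, ignored=IGNORED_PARAMS):
    """Return `url` without the ignored query parameters and without the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in ignored
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


a = canonical_url("http://www.example.com/product?id=42&sessionid=abc123")
b = canonical_url("http://www.example.com/product?utm_source=newsletter&id=42")
print(a)
print(b)
print("Same page:", a == b)
```

Both URLs collapse to the same address because only the product ID, which really changes the content, is kept. The same mapping is what you would express with a canonical URL on the page itself.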

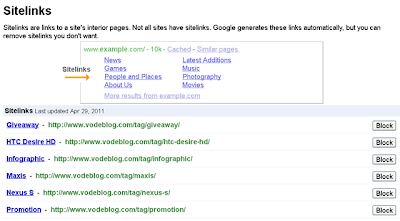

6. Sitelinks

As we have explained several times in the past, sitelinks are links that appear under some results in the SERPs in order to help users navigate sites more easily. Sitelinks are known to attract user attention, and they are algorithmically calculated based on the link structure of the website and the anchor texts that are used. Through the Google Webmaster Tools console the webmaster is able to block the sitelinks that are not relevant, targeted or useful to the users. Failing to remove irrelevant sitelinks, or removing the wrong ones, can lead to a low CTR in search engine results and thus affect the SEO campaign.

Revisiting the console regularly and providing the appropriate feedback about each unwanted sitelink can help you get better and more targeted sitelinks in the future.

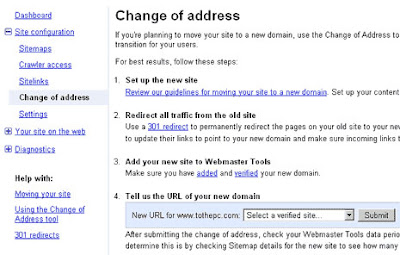

7. Change of Address

The Change of Address option is available only for domains and not for subdomains or subdirectories. From this console you are able to transfer your website from one domain to a new one. Most users will never need this feature; nevertheless, if you find yourself in this situation you need to proceed with caution. Using 301 redirects and mapping the old pages to the new ones are extremely important to make the transition successful. A misconfiguration can cause a lot of problems, although it is highly unlikely that someone will set up this option accidentally.

Avoid using this feature when your old domain has been banned by Google, and always keep in mind that changing your domain will have a huge impact on your SEO campaign.

The Google Webmaster Tools is a great service because it provides all the necessary resources to the webmasters in order to optimize, monitor and control their websites. Nevertheless caution is required while changing the standard configuration because mistakes can become very costly.

Best SEO Trends For 2012

3:24 AM

Google, News, Search Engine Optimization, Tips

The year 2011 was an important one for online marketing and especially for search engine optimization. Why? Primarily because businesses started to understand the impact they can create through the web.

Even small businesses jumped into SEO and social media marketing and made a big difference to their online presence. So obviously the next logical questions are: What now? What changes and trends can people expect in 2012?

Firstly, businesses will need to understand that SEO will no longer be a secret weapon. It is a universal thing by now and companies cannot expect that the competition will not be tapping online resources. Everyone is keen to invest in online marketing and search engine optimization for the obvious benefits. However, this in no way means that SEO will not be important. Quite contrarily, SEO will gain more popularity and the strategies will become more complex. A lot of other variables like platform automation, multi channel search, social signal in search, privacy, content marketing, social media influence and mobile searches will shape the SEO strategies.

In simpler words, search engine optimization will evolve into something more intelligent and will require a lot of planning and proper execution. There won’t be any universal plans, and companies will really have to tailor their strategy to what they want. Social media will get bigger in SEO than it ever was. In 2012, search engines will try to interpret the social graph in a better way. Social media is surely influencing ranking positions and had better be taken into account. Moreover, paid search results will increase, replacing the genuine or organic search results. For what reasons? Well, Google and other search engines are under a lot of pressure to increase revenues and profits, and paid search results are a great way of doing that. First we witnessed Google ads and then Google Shopping, so it’s quite clear that Google may start charging for other things too.

Another interesting trend that we can expect in 2012 is a harder stand against spammy practices, which is a good thing as good SEO practices will be encouraged this way. Google is accumulating more data than it ever did, and this will increase further this year. Artificial links, article spinning and various other spammy practices will surely be on the decline this year.

Moreover, competition in SEO will be fiercer now. As more and more companies are online, web marketing will be at an all time high. In 2012, more businesses will look to use SEO for selling and brand building. However, due to the change in trends some will even fail to a certain extent and only the companies which will accept the changes and plan accordingly will be successful.

Finally, SEO trends in 2012 will continue to lean towards quality instead of quantity, which was more in focus last year. Google Panda 2 will shift the focus to fresh content rather than spun versions of everything. It is pretty clear that SEO will not be just SEO any longer. The transformation is inevitable this year, with lots of other things coming under SEO engineering.
